Why Is AI So Good at Coding?

Or Is It?

Having begun my reflection on the impact of AI on the World of Code, I want next to consider why coding is one of those areas that AI is good at. I also want to engage with the question of whether it really is good at coding or, maybe more importantly, good for coding.

Or Is It?

Before we look at why AI is so good at coding, we do have to answer the question, “Is it?” Is AI really good at coding? Right now, you’ll get all sorts of answers to that question, including that it’s not, even from programmers. Possibly my favourite take on AI not actually being that good at coding comes from a discussion amongst some of the programmers in my parish in which a study was cited. The study first got various groups to predict how much more efficient AI-assisted programmers would be, garnering predicted increases in efficiency ranging from 30-50% from economics experts down to 20-30% from actual developers. It then found that AI assistance actually resulted in anything from no gain in productivity all the way down to a 40% decrease in efficiency!

The basic problem with this study is actually in the title: “Measuring the Impact of Early-2025 AI on Experienced Open-Source Developer Productivity” (emphasis mine). AI has gotten significantly better at coding since early 2025, with Gemini 3’s late 2025 release being easily able to create a fully functional and rather fun Rogue clone with pretty minimal guidance from me.

More significantly, from my perspective as a programming teacher, AI is now easily able to “one shot” every one of my classic teaching assignments, even the more advanced ones, producing results comparable to the extended efforts of the best of my student programmers (like this result, implemented here, using only the code and instructions in the first response). And, as a final bit of proof, one of the leading creative programmers in my parish had this to say about what is now being referred to as “agentic coding” or “agentic engineering”:

I must admit, with Opus 4.6, I am completely sold on AI agents taking over coding now. I don’t even review lines of code anymore. After having it build a feature, I just send a command to run four agents in parallel to assess my recent changes for problems spanning:

- Code reusability

- Security

- Performance

- Logical Coherence & Unit Testing

That way, it can build an entire feature, then I ask it to give me a report on those issues, which I can choose to fix/defer/etc.

I lead the overall design, the structure and organization, and guidelines around the code, but for individual lines of code, I barely review them now. I mostly just oversee since the agents lose context and will tend to duplicate code, or introduce security issues by sending too much data to the client, so I suppose I kind of know where to look.

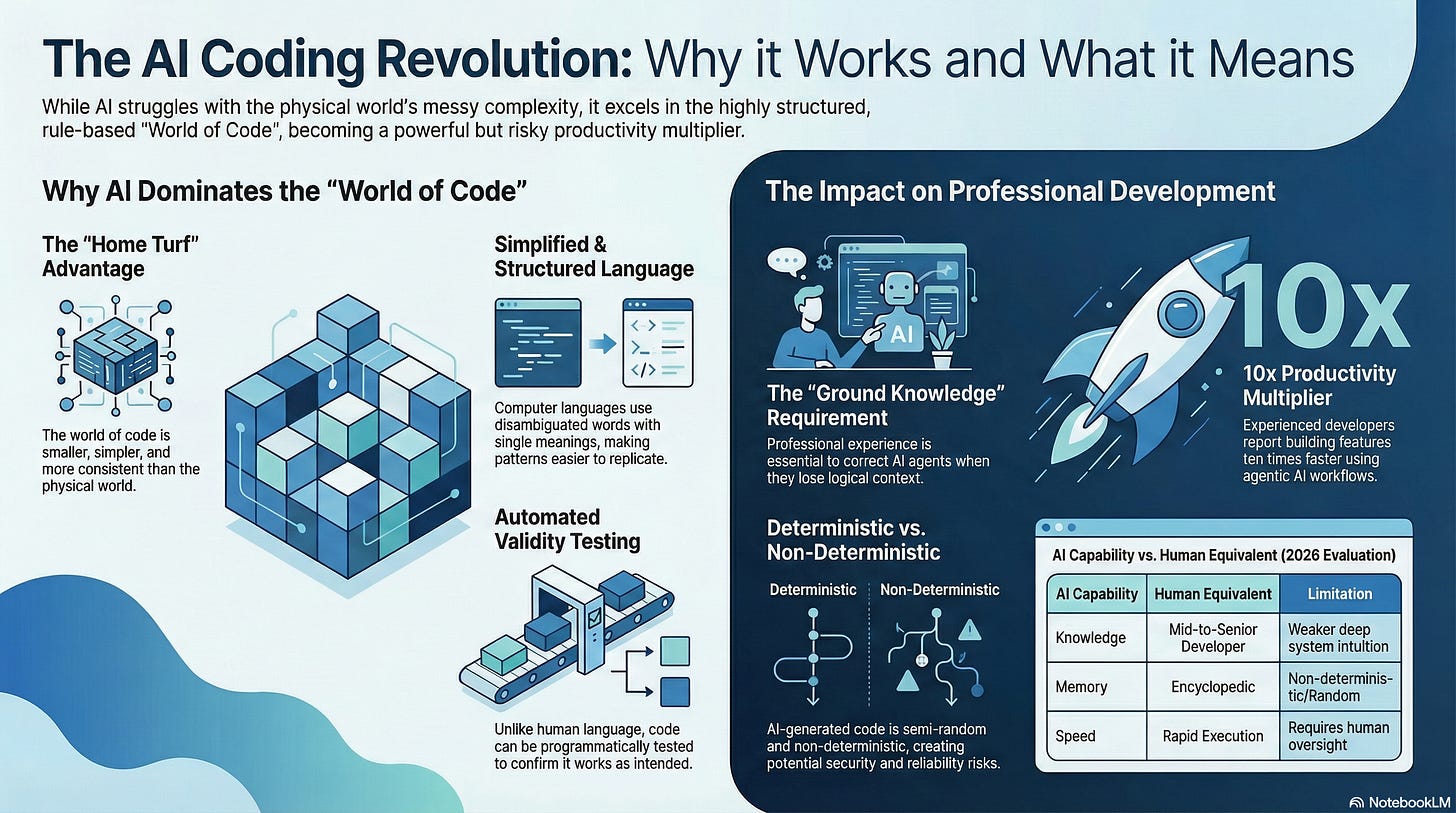

What does that mean for learning to code? I’m not sure. I think I greatly benefit from my decade of professional coding/architecture experience, and this is what allows me to make the most of these tools. If I didn’t have the ground knowledge I wouldn’t have an intuition for correcting the agents when they go off track.

But I genuinely am building at least 10x as quickly as I did a year ago without quality loss through these new ways of building.

So, I think we can safely say that AI has gotten very good at coding. ChatGPT evaluates AI’s current coding abilities (as of early 2026) as follows:

AI is roughly equivalent to:

a fast, knowledgeable mid-to-senior developer

with encyclopedic memory

but weaker deep system intuition

It dramatically amplifies top programmers—but does not fully replace them.

While ChatGPT could of course be biased, or even hallucinating, here, its evaluation more or less aligns with what I’ve heard from other programmers who are using AI extensively now.

Why So Good?

So, given that large language model AIs can still struggle with simple things like how many “r”s are in the word “raspberry” or even whether a “quick brown table” is likely to exist, how on earth did they somehow get so good at programming?

To answer this, we need to get back to how AI interacts with the real world, as opposed to the “world of code”, and to the difference between natural human languages and the programming languages we’ve created to control computers.

Home Turf Advantage (World Models)

First, as Gary Marcus has pointed out repeatedly (as in this piece from last summer), generative AIs lack a consistent world model. LLM AIs typically derive all their “knowledge” of the world around them from semi-randomly selected subsets of the most common patterns they’ve detected in analyzing humans’ usage of language, tweaked by whatever additional patterns we’ve given them, whether that be pictures, videos, documents, prompts, or even the thumbs-up/thumbs-down feedback that AI chatbots collect from us. The result is always a partial and incomplete and therefore inconsistent “world model”, which means that when considering possible completions of the phrase “the quick brown…” from a linguistic standpoint, the AI remains blithely unaware of the physics that would make a “quick brown table” more or less an impossibility (barring the rather unlikely invention of a jet-powered table)![1]

By contrast, when LLM AIs are dealing with computer code, they are more or less on their “home turf”, dealing with a vastly simplified and very well-documented subset of inputs and outputs and operations. As a result, the “world of code” world models they induce from their training, while still inconsistent and incomplete, are significantly smaller. With “scaling” (training on more data) and larger “context windows” (holding more information to consider as context for the problem at any given time), these models tend to be less inconsistent and incomplete than their world models of the vastly more complex real world, resulting in much more useful and reliable results. Here we might compare the games tic-tac-toe, checkers, chess, and Go, each of which is significantly more complicated than its predecessor in the list, so that these games “fell” to various programmed AIs in the order presented as computers became more powerful: that is, faster (and thus able to calculate possible moves more rapidly) with more memory (able to store and thus consider more possible outcomes). Compared to the complexity of the real world, the “world of code” is on the level of tic-tac-toe, or maybe checkers.
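To give a feel for how small the tic-tac-toe end of that scale is, here is a minimal sketch in Python (chosen only for illustration; the function names are invented for this example). It walks every board position that can be reached in legal play, stopping each game as soon as someone wins, and counts them. Nothing here models how an LLM works; it only shows that a sufficiently small “world” can be held in memory and examined in its entirety.

```python
# All eight winning lines on a 3x3 board, as index triples into a 9-character string.
WIN_LINES = [
    (0, 1, 2), (3, 4, 5), (6, 7, 8),   # rows
    (0, 3, 6), (1, 4, 7), (2, 5, 8),   # columns
    (0, 4, 8), (2, 4, 6),              # diagonals
]


def winner(board):
    """Return "X" or "O" if that player has three in a row, otherwise None."""
    for a, b, c in WIN_LINES:
        if board[a] != " " and board[a] == board[b] == board[c]:
            return board[a]
    return None


def reachable_positions():
    """Collect every position reachable in legal play, starting from an empty board."""
    start = " " * 9
    seen = {start}
    stack = [(start, "X")]  # X always moves first
    while stack:
        board, player = stack.pop()
        if winner(board) is not None:
            continue  # the game is over, so no further moves are legal
        for i, cell in enumerate(board):
            if cell == " ":
                nxt = board[:i] + player + board[i + 1:]
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append((nxt, "O" if player == "X" else "X"))
    return seen


print(len(reachable_positions()))  # 5478
```

The whole game fits in a few thousand positions and the search finishes almost instantly. The same brute-force approach is hopeless for chess or Go, let alone for anything resembling the real world.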

Computer Code Is Simplified and Highly Structured Language

This same significant difference in levels of complexity applies to computer languages themselves, as compared to natural languages. As I’ve mentioned a number of times in my World of Code series already, computer languages are deliberately disambiguated: where the word “set” has literally hundreds of different meanings in English, if the word is used in a computer language, it will generally only have one. In my beloved TRS-80 Color Computer BASIC, for example, the word “set” is used only to SET a pixel on the screen, specified by x and y coordinates, to a specific colour, c, using the syntax SET(x, y, c). This radical reduction in the range of possible meanings makes it much easier for LLM AIs to accurately analyze and reproduce these patterns. When you combine this with the fact that computer code works like the parts of a machine, reusing common command sequences to achieve clearly defined goals, these repeated, simplified patterns become far easier to store and reproduce and to correlate with predictable results than anything we see in human language.

Think about it this way. If we were to train two LLM AIs using the exact same amount of training data, one on human language and the other on a computer language, the computer language would not only have far fewer words than the human language, but each of those words would have a single distinct meaning. Additionally, there would be far more repeated patterns in the computer language training data than in the human language training data, and the descriptive target for the computer language would always be some variation on controlling computers, as opposed to the human language’s description of any and all aspects not only of the “real world” but also the interior world of human emotion and the almost unlimited fictional world of the imagination. It should be instantly clear which of the two presents a wider range of meaning, even without the additional complexity of the multiplication of possible meanings due to the ambiguities inherent in human language. A language with a narrower range of meaning and more repeated patterns is easier to store and analyze in its entirety, which makes it easier to statistically identify and reproduce appropriate (meaningful/effective) patterns from the smaller and tighter language’s dataset.

High-Quality Training Data

It’s worth noting here that we do not actually train LLM AIs on computer code separately, and for a very good reason: training these models on the very extensive and very high-quality combined body of “open source” code and its corresponding documentation provides us with a “Rosetta stone” effect, helping LLM AIs to understand what we want when we provide them with natural-language prompts asking them to generate computer code. Since we’ve already provided the AI with natural-language descriptions of what the code does in the documentation, it can “leverage” these naturally associated patterns to come up with code that does what you’ve asked it to do, reproducing patterns of code that correlate with your natural-language prompt in ways that closely parallel the correlations it has already catalogued between code and its corresponding comments and design and program documentation.
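To make the “Rosetta stone” idea a little more tangible, here is a small Python sketch that pulls matched pairs of natural-language description and code out of a source file, using the docstrings that well-documented code already carries. This is an illustration only, not a description of how any real training pipeline prepares its data; the sample source and the function name `description_code_pairs` are made up for the example.

```python
import ast

# A tiny, invented stand-in for a documented open-source module.
SAMPLE_SOURCE = '''
def add_one_to_ten():
    """Add the numbers 1 to 10 together and return the total."""
    return sum(range(1, 11))

def greet(name):
    """Return a friendly greeting for the given name."""
    return f"Hello, {name}!"
'''


def description_code_pairs(source):
    """Yield (docstring, function source) pairs for every documented function."""
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if isinstance(node, ast.FunctionDef):
            doc = ast.get_docstring(node)
            if doc:  # skip functions that carry no description
                yield doc, ast.get_source_segment(source, node)


pairs = list(description_code_pairs(SAMPLE_SOURCE))
for doc, code in pairs:
    print("DESCRIPTION:", doc)
    print(code)
    print("---")
```

Each pair puts an English sentence right next to the code that carries it out, which is exactly the kind of naturally occurring association the paragraph above describes.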

Code Can Be Tested

Another advantage that computer code has over natural human language is that its validity and/or effectiveness can be much more directly and more easily tested. Code either runs or it doesn’t, while even what look like “nonsense” syllables can have a powerfully evocative meaning in natural human languages (for example, “’Twas brillig, and the slithy toves…”). More than that, the output from generated code can also be evaluated programmatically. I’m thinking here of the “self marking” assignments in the CodeHS course that I teach, which can test not only whether the program runs, but also whether the output it generates corresponds to what is expected. This allows AIs that generate code to automatically test the code they generate to see if it will actually produce what the user has asked for in the prompt. This feature of computer code also allows automated “reinforcement learning” to be used in the training of code-generation AIs in ways that would be impossible for natural-language generation.

Compare, for example, the feedback an AI might be able to get from my query about the relative frequency of words that might follow “the quick brown…” vs. a request to generate a JavaScript program that adds the numbers 1 to 10 together, which should produce a total of 55. The best feedback it could receive in the first case might be a follow-up comment or correction from me in the chat, which will probably, at best, address only one aspect of the natural-language content that it has generated, whereas if the code that it generates does not produce a result of “55” when run, the AI can immediately identify that there’s a problem with the generated code. While these examples do not perfectly line up with how AI training actually takes place, the contrast here is at least illustrative of the radical difference between the two problems.
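The “adds to 55” check is simple enough to sketch. The example below uses Python rather than JavaScript, purely for illustration, and both “generated” programs are hand-written stand-ins, not real AI output. The helper names `run_and_capture` and `check` are invented for this sketch. It runs each candidate, captures what it prints, and compares that to the expected total, which is roughly the kind of automatic pass/fail signal described above.

```python
import contextlib
import io

EXPECTED_OUTPUT = "55"  # 1 + 2 + ... + 10


def run_and_capture(source):
    """Execute a candidate program and return whatever it printed."""
    buffer = io.StringIO()
    with contextlib.redirect_stdout(buffer):
        # Acceptable for a toy demo only: never exec untrusted code like this.
        exec(source, {})
    return buffer.getvalue().strip()


def check(source):
    """Return True only if the program runs and prints the expected total."""
    try:
        return run_and_capture(source) == EXPECTED_OUTPUT
    except Exception:
        return False  # a crash counts as a failed attempt


# Two hypothetical candidates: one with an off-by-one bug, one correct.
buggy_candidate = "print(sum(range(1, 10)))"  # stops at 9, so it prints 45
fixed_candidate = "print(sum(range(1, 11)))"  # includes 10, so it prints 55

print(check(buggy_candidate))  # False
print(check(fixed_candidate))  # True
```

No human has to read either program to know which one failed. Nothing comparable exists for judging whether a paragraph of natural-language output was “right”.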

Average Code Is Acceptable

Finally, while this may be the least important factor in all this, it is worth noting, I think, that the content generated by LLM AIs naturally follows the law of averages, since it is simply a semi-random statistical continuation of the user’s prompt that is significantly likely, based on the model’s analysis of the linguistic patterns, to be meaningful. The semi-random factor (temperature) that we introduce in order to ensure different and “creative” results is one of the factors that naturally produces hallucinations, especially in the absence of a complete and consistent world-model. These hallucinations naturally occur in AIs used for coding, just as they do when we use AIs to generate natural language outputs, but they can be filtered out and corrected, to some extent, especially as “agentic” AI models are given the task of testing code and correcting any identified errors and incorrect outputs. The resulting code will generally be average rather than exceptional in quality, but average code that works is acceptable—and, in some cases, even preferable to exceptional code that other programmers may not be able to understand.
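For readers who want to see what the temperature setting does, here is a toy Python sketch. The candidate words and their scores are invented for the example and do not come from any real model; the function name `next_word_probabilities` is likewise hypothetical. It turns raw scores into probabilities at a low and a high temperature, then draws a few samples.

```python
import math
import random


def next_word_probabilities(scores, temperature):
    """Convert raw scores into probabilities (a softmax), scaled by temperature."""
    scaled = [score / temperature for score in scores.values()]
    peak = max(scaled)  # subtract the maximum for numerical stability
    weights = [math.exp(s - peak) for s in scaled]
    total = sum(weights)
    return {word: w / total for word, w in zip(scores, weights)}


# Made-up scores for completions of "the quick brown ..."
scores = {"fox": 5.0, "dog": 3.0, "bear": 2.0, "table": 0.0}

cool = next_word_probabilities(scores, temperature=0.5)
warm = next_word_probabilities(scores, temperature=2.0)

for word in scores:
    print(f"{word:>5}  T=0.5: {cool[word]:.3f}   T=2.0: {warm[word]:.3f}")

# Sampling at the higher temperature: unlikely words now turn up more often.
rng = random.Random()
words = list(scores)
print(rng.choices(words, weights=[warm[w] for w in words], k=10))
```

With these invented numbers, “fox” takes roughly 98% of the probability at the low temperature but only about 60% at the high one, while “table” rises from practically zero to around 5%. That is the trade-off in miniature: more variety, and more room for the odd “quick brown table”.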

Good for Coding?

OK, so generative AI is now good at coding. The next question, and the one which interests me even more, is, now that AI is good at coding, whether this situation is good for coding. This is a much more complicated question, as it immediately reengages with the much higher level of complexity that is the real world, and where exactly this is going and what effects it may have is not yet entirely clear.

I will freely admit that I was caught off-guard by how quickly AI has become this good at coding—I was actually unsure that AI would even get to this level, which is one of the reasons I first wanted to establish how and why it did so. But we’ve actually already got “headless”/“dark” software factories in which software developers (or “agentic engineers”) set up complex development prompts and testing frameworks and then let AI agents develop and test without any significant further human intervention—and then ship the results without even reviewing the AI-generated code.

The first and most obvious question is what the human impact of this will be. This will clearly have a significant impact on software developers, and it’s already having an (outsized?) impact on software company stock prices as the market reacts to the idea that anyone with access to good generative AI might now be able to get AI to make their own software for them. Junior developer positions are disappearing, and, as a tech teacher, I’m having to massively rethink how and what I teach my students. These impacts, combined with natural saboteur- and luddite-style responses to having AI effectively foisted on us, make the human impact of this first potential AI-replacement of human beings look largely negative and deeply disturbing. On some level, though, all this, while obviously important and relevant, doesn’t directly address the question of whether or not AI is good for coding.

Even this narrower question is hard to answer, however, so I’ll divide my attempt at an answer into three parts: yes, no, and we’ll have to wait and see.

Yes

AI-generation of code is rapid and now relatively reliable and is becoming more accessible. While the ones who use AI to generate code and who are most likely to get the best results in prompting AI to generate code are largely programmers, non-programmers can now get pretty good results, making custom AI-assisted and -generated code more accessible than it has ever been before. In this, AI follows a familiar pattern: as computers have become more powerful and more complex, we’ve seen a steady stream of abstraction layers developed (high-level languages, libraries, IDEs, SDKs) that are designed to make programming easier for and more accessible to more people. Per-programmer productivity can, in fact, become higher, and AI-generated code is making it easier for more people to harness more computing power to get their computing devices to do what they want them to do.

No

At the same time, even apart from the huge and hurtful human impact mentioned in passing above, AI-generated code is significantly different from the ease-of-use abstractions we’ve developed before. While it’s potentially way more accessible (who wouldn’t like to just describe to their computer, in natural language, the program they want to have that doesn’t exist yet and have the computer make it for them?), it’s also non-deterministic. That is, since generative AIs are naturally semi-random, we don’t always know what they’ll produce in response to our queries, as opposed to previous abstractions like high-level languages (such as BASIC or Python), which act in deterministic and predictable manners, always generating the same low-level (machine language) computer code for every English-like command we provide. This leads to all sorts of potential problems, like the hundreds of security flaws that plagued the AI-generated AI-assistant OpenClaw, which included “one shot” AI takeovers of poorly configured OpenClaw instances, or inexperienced AI-assisted robotics programmers routinely blowing up their equipment as they blindly follow the advice of the real-world-model-deficient AIs. These sorts of problems can only get worse and more widespread as non-programmers entrust their software development dreams to AI without any understanding of what’s going on behind the scenes.
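The contrast between the two kinds of abstraction can be sketched in a few lines of Python. Two caveats: Python compiles to bytecode for its own virtual machine rather than to machine language, so it stands in here only as an example of a deterministic translation step, and `toy_generator` is a hypothetical stand-in that picks at random from three hand-written variants, not a real AI model.

```python
import random

# Deterministic abstraction: compiling the same source twice gives the same bytecode.
source = "total = sum(range(1, 11))"
first = compile(source, "<demo>", "exec")
second = compile(source, "<demo>", "exec")
print(first.co_code == second.co_code)  # True

# Non-deterministic abstraction: the same request can come back as different code.
VARIANTS = [
    "total = sum(range(1, 11))",
    "total = 10 * 11 // 2",
    "total = 0\nfor n in range(1, 11):\n    total += n",
]


def toy_generator(prompt, rng):
    """Hypothetical stand-in for a sampling-based code generator."""
    return rng.choice(VARIANTS)


rng = random.Random()  # deliberately unseeded, so runs can differ
for _ in range(3):
    generated = toy_generator("add the numbers 1 to 10", rng)
    namespace = {}
    exec(generated, namespace)  # toy demo only; never exec untrusted code
    print(repr(generated), "->", namespace["total"])
```

In this toy case every variant happens to be correct, so the variation is harmless. The concern raised above is what happens when one of the possible outputs is subtly wrong and nobody is in a position to notice.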

Even for experienced programmers, as huge and complex programs are generated by AI, reviewing the code in such programs (assuming it’s human-reviewed at all) becomes difficult, if not impossible, without the assistance of AI.[2] Given AI’s tendency to hallucinate and/or misunderstand, and the fact that it just doesn’t think the way we do, this seems like a potential recipe for disaster, especially as we entrust more and more aspects of our lives, thoughts, and essential digital services to computers controlled by code that is AI-generated and then AI-reviewed. The risk is compounded by the fact that the rise of AI and AI-coding has just made it that much harder to justify investing a significant portion of one’s life into learning to code, or even to read and understand code, when it’s so much easier to just get AI to do it all for us.

We’ll Have to Wait and See

Given that the unknowns in this situation range from questions of how much better AI is going to be able to get at coding, to how widely and to what systems AI-generated code will be deployed, to the as-yet-to-be-determined human impact AI coding will have, it really remains to be seen what the ultimate effect of AI coding will be. Will it be a utopia where AI simply creates for us whatever program we need to get our computers to do whatever we want them to do whenever we want it? Or will it be a nightmare made up of the AI equivalent of “spaghetti code” running Terminator-style drones that will take humans out as inefficient threats to AI’s existence that need to be simply eliminated?[3]

Given my own personal experience of the world of code, of Sci-Fi, and of how human beings interact with both the world of code and the “real world”, I suspect the ultimate end-point will lie somewhere in-between these two utopic and dystopic options—but I think our chances of arriving closer to the utopian option will be greatly increased if we continue to engage thoughtfully not only with AI but also with the “world of code” in which both AI and we now live and move and breathe and have our being.

[1] When I asked ChatGPT to review this essay for me, asking it to look, in particular, for any inaccuracies, I not only ended up disagreeing with every one of its critiques, but it also produced this gem which I can’t help but share here:

“Quick brown table” being “more or less an impossibility” is just… not true

A “quick brown table” is unusual, but not physically impossible (a table on wheels, a “quick” brand name, metaphorical usage, etc.). This example weakens your “no world model” point because readers can poke holes in it.

If you want a tighter example, use something like:

basic physical constraints (e.g., “a square circle”)

or contradictions (“a married bachelor”)

Those are clearer impossibilities.

[2] That being said, the ability to use AI to review code can also be considered a positive, as even human-generated code-bases in some complex projects have become so large that it has become increasingly difficult for humans to review all the code.

[3] I should note that I also hold out hope, in this dystopic scenario, that the natural brilliance of our best human programmers will mean that we’ll be able to take advantage of the AI’s abysmally average code to wrest back control of the Terminator drones from the AI.
